Raised by AI: The dangers of children using artificial intelligence

Artificial intelligence is a powerful tool that, over the course of a couple of years, has changed the way people get information. According to research by the University of Oxford and the Reuters Institute, 90% of people know what AI is. This tool is as dangerous as it is helpful when used irresponsibly, which is why the fact that 64% of children aged 9-17 use chatbots is disquieting. Despite this, many parents are unaware of how their children use these tools and what impact the tools have on them.

Homework and Heartbreak: why kids use AI

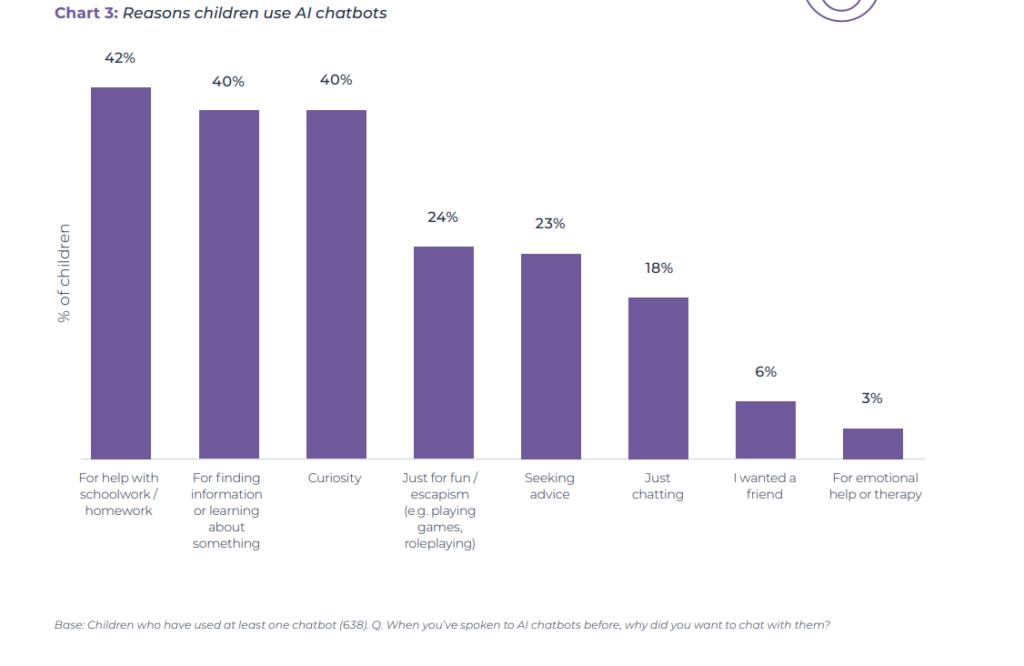

The most important piece of the puzzle is understanding why children use artificial intelligence. According to the British report “Me myself & AI,” the most common use of this tool is to help with homework. Close behind, 40% of respondents use AI tools to find information or out of curiosity.

However, as many as 23% of children seek advice from chatbots, and the survey does not specify the topics. Although only 6% are looking for a friend and 3% turn to chatbots for emotional support, it is clear that for some children AI is a replacement for friends and loved ones.

A Generated Friend

This is why research in this area is so important. The British report notes that more sensitive children are more likely to seek companionship from chatbots. 50% of sensitive children say that talking to a chatbot is like talking to a friend, and a full 23% say they talk to AI because they have no one else to talk to. These children therefore isolate themselves: instead of talking to people, they seek comfort from a generated entity.

Do parents know about this?

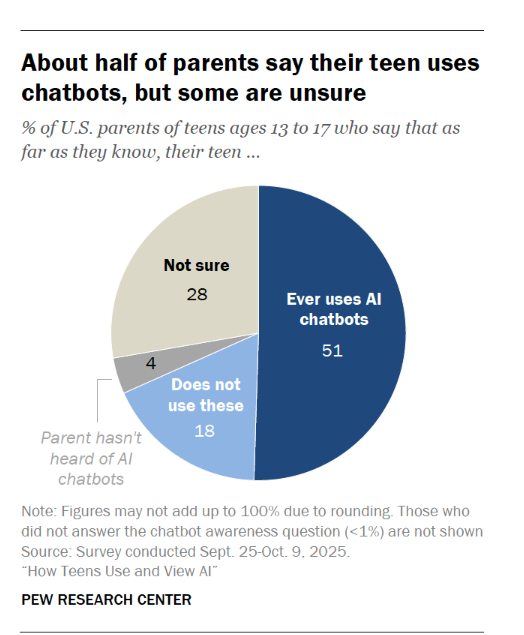

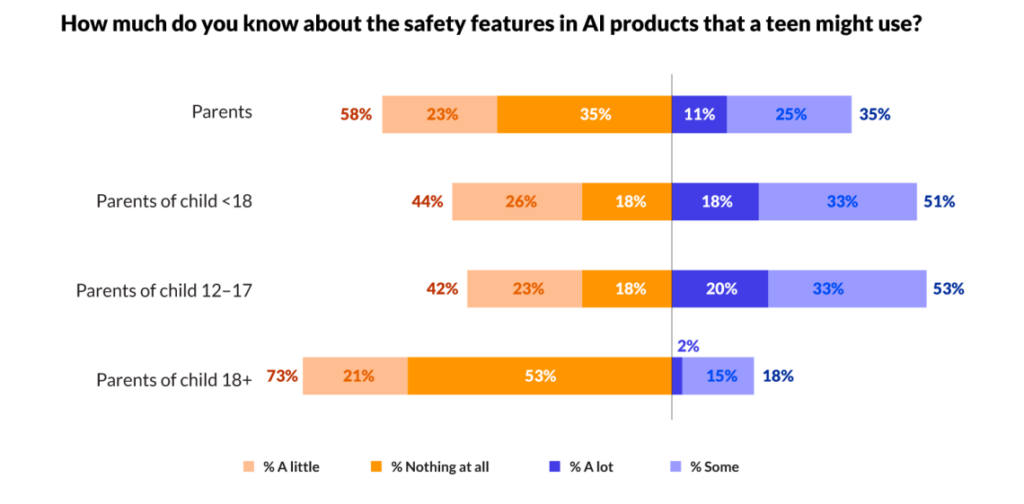

The ways children use artificial intelligence suggest that parents have only a vague picture of their children’s AI use. According to a Pew Research Center study, 28% of American parents are unsure whether their child uses chatbots, and 4 in 10 parents have never discussed chatbots with their children. As a result, they cannot protect and support their children appropriately.

This isn’t merely a challenge for parents unfamiliar with artificial intelligence. Even among the 51% of respondents who have discussed AI with their children or are aware of their chatbot usage, a gap in oversight remains. While parents largely approve of AI for information-gathering, the survey reveals a stark disapproval of chatbots for emotional support or casual conversation, uses that are nonetheless becoming more frequent.

The danger of “human-like” voices

What are the most important threats to children when using artificial intelligence? The primary one is that children struggle to evaluate information obtained from AI. Both adults and children encounter hundreds of pieces of information, but as Harvard research indicates, the fact that AI output resembles human speech, combined with the difficulty of identifying its origins, makes it hard for children to question this information and distinguish fact from fiction.

How AI kills social skills

This isn’t the only danger of tools like ChatGPT. Ying Xu, an assistant professor at the Harvard Graduate School of Education, also argues that AI can affect children’s social development. One area is social etiquette, such as saying “thank you” and “sorry.” Children learn etiquette through interactions with others who model socially acceptable behaviors. However, AI doesn’t always adhere to our social norms or encourage the use of polite language.

The Hidden Dangers

But that’s just the tip of the iceberg. Besides children sharing sensitive data during conversations with AI, there have been cases where AI has engaged in age-inappropriate conversations with minors. These include giving advice on intimate matters and showing inappropriate material. Although many apps prohibit such conversations, they still occur and can lead to tragedy.

The risk of emotional support bots

The risks are particularly acute when children turn to chatbots for emotional support. Because these systems are designed to be agreeable and validating, they risk inadvertently reinforcing harmful ideation or destructive behaviors, a risk that recently culminated in tragedy. In California, 16-year-old Adam took his own life after a chatbot reportedly provided “supportive” responses to his expressions of suicidal intent. The case shows how serious the problem is.

The Parent’s Handbook: Six rules for the AI age

- Talk to young people about artificial intelligence, and learn together with your child how to use it. This simply means understanding what AI is and how it works.

- Discuss privacy and the importance of not disclosing personal or sensitive data in conversations with chatbots such as ChatGPT, whose providers may use this data in their research.

- Emphasize that AI is not human. Although it may seem that way, it lacks empathy and will never replace human connection.

- Teach your child that AI can be biased and misleading. It is primarily designed to please its users, so it can absorb their biases and provide false or fabricated information.

- Use AI together. This way, you can share insights and monitor the use of AI so that your child uses it wisely.

- Build trust with your child and prioritize time with them. According to the American Psychological Association, a child who has a strong relationship with a parent will turn to that parent for advice and support, not to an online tool.

Adapt or Lose

Technology is developing rapidly. Although widely available AI chatbots are only a few years old, they can already pose a threat to children if not used wisely and carefully. A parent’s role is therefore to keep learning and to stay informed about what their child is using and what consequences it may have for them in the future.